
Are We Outsourcing Our Thinking?

The Great Re-Imagineering


March 18, 2026

Let me ask you something.

When was the last time you read 10 pages — uninterrupted, unmediated by a scroll bar, unpunctuated by a notification, and devoid of a “TL;DR” summary waiting at the bottom?

If you paused to think, that pause is the answer.

If that question induced a flicker of cognitive dissonance, you have just felt your brain's patience for sustained attention reaching its vanishing point.

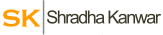

Building (and Demolishing) the Reading Brain

Cognitive scientist Maryanne Wolf, in her seminal work Reader, Come Home, reminds us that the reading brain is not a biological inheritance; it is a hard-won cultural acquisition. We are not “wired” to read; we are rewired by the act of reading.

When we read deeply and without interruption, we are not merely downloading data; we are conducting “prefrontal cortex calisthenics.” We forge inferences, cultivate empathy, and build the mental stamina that sophisticated ethical reasoning requires.

Conversely, when we surrender to “skimming-as-default” mode, we are effectively performing a self-directed lobotomy on our capacity for nuance. By offloading the “heavy lifting” of synthesis to a chatbot, we are not just saving time; we are eroding the very neural scaffolding we need to grasp the world rather than merely label it.

The Impressionable Mind

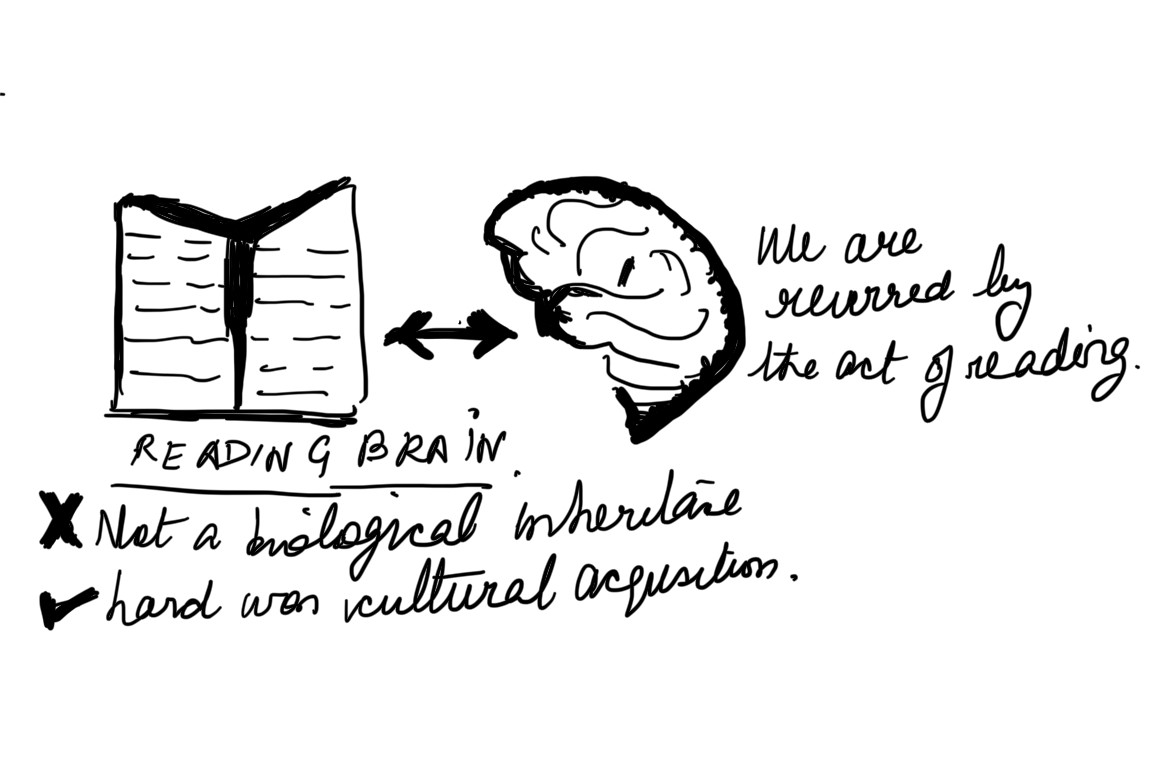

Daniel Kahneman (author of Thinking, Fast and Slow) would likely have viewed our current dependence on AI as a catastrophic victory for System 1. AI behaves like the ultimate “System 1” engine: it delivers answers with a terrifying, effortless confidence that mimics gut instinct.

This creates a state of “cognitive ease”—a psychological comfort zone where, because the information is easy to process, our brains erroneously assume it is inherently true. The danger is not that AI provides “facts,” but that it provides a “feeling of knowing” that bypasses our critical filters entirely.

When we treat an algorithmic output as a conclusion rather than a hypothesis, we allow our System 2—the slow, metabolically expensive, analytical machinery responsible for skepticism—to quietly atrophy. Just as a muscle withers without resistance, our capacity for rigorous inquiry weakens when we stop wrestling with the “friction” of ambiguity. We are becoming “efficiently wrong.”

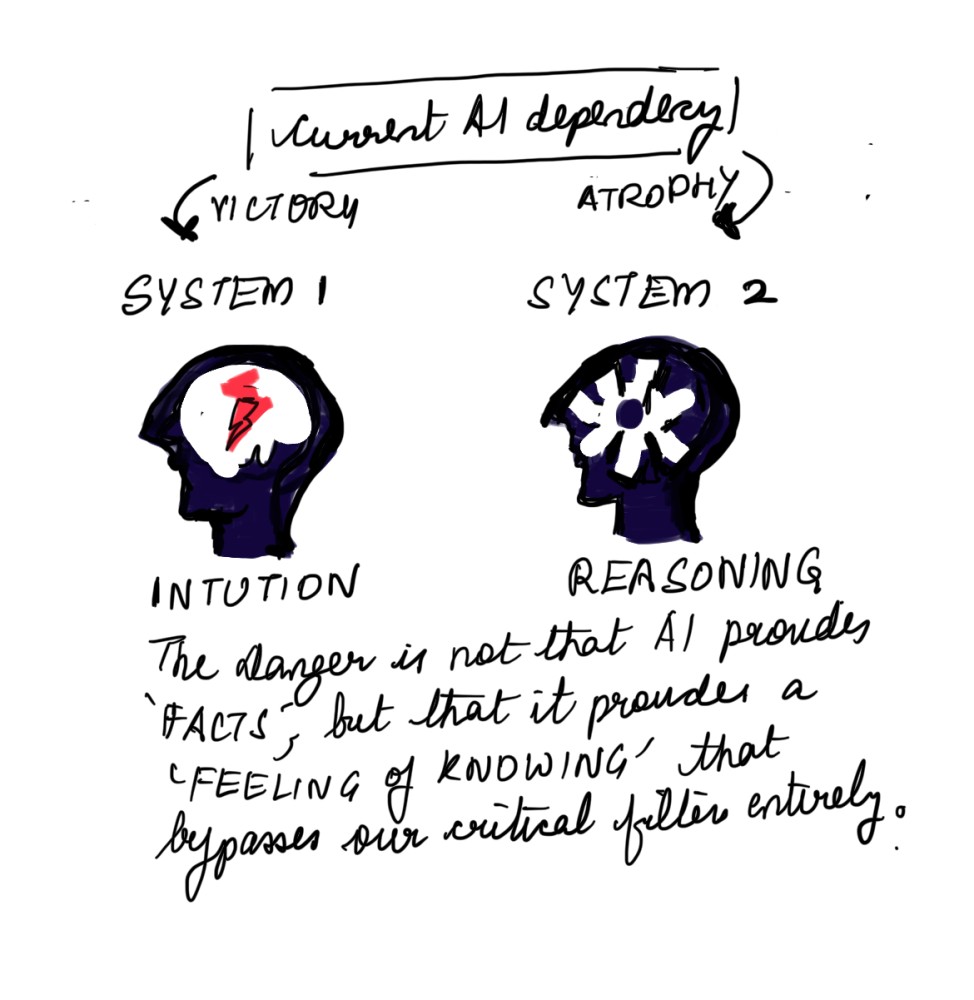

The Rise of the “Hollow Expert”

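A 2023 field experiment with Boston Consulting Group consultants, co-authored by researchers from Harvard Business School, MIT Sloan, and BCG, mapped a “jagged frontier” of AI capability. On tasks inside that frontier, consultants using AI worked faster and produced higher-quality output; on a task just outside it, they were more likely than their unaided peers to reach the wrong conclusion. Are we inadvertently training a cohort of “Hollow Experts”?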

- The Prompt-Smith vs. The Arguer: They can manipulate a model to get a result but cannot defend the structural integrity of the logic behind it.

- Retrieval vs. Reflection: They can find anything, yet understand nothing of the “intellectual provenance” of the ideas they curate.

- Generation vs. Origination: They can remix the statistical likelihoods of a training set but lack the “intellectual grit” to produce a truly novel insight.

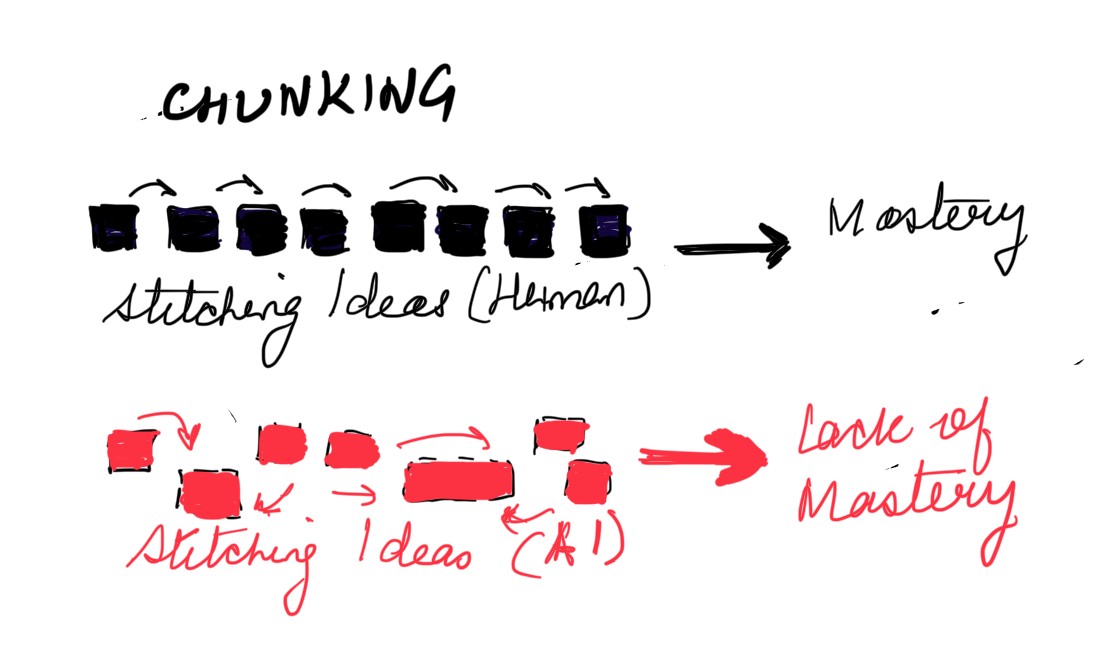

Barbara Oakley, a titan in the field of learning science (Learning How to Learn), emphasizes the necessity of “chunking”: the process of stitching ideas together through focused, effortful practice. If we let AI do the chunking for us, we never build the synaptic strengthening that mastery requires. We aren’t learning; we are merely observing a machine learn on our behalf.

From “Pancake People” to “Mirror People”

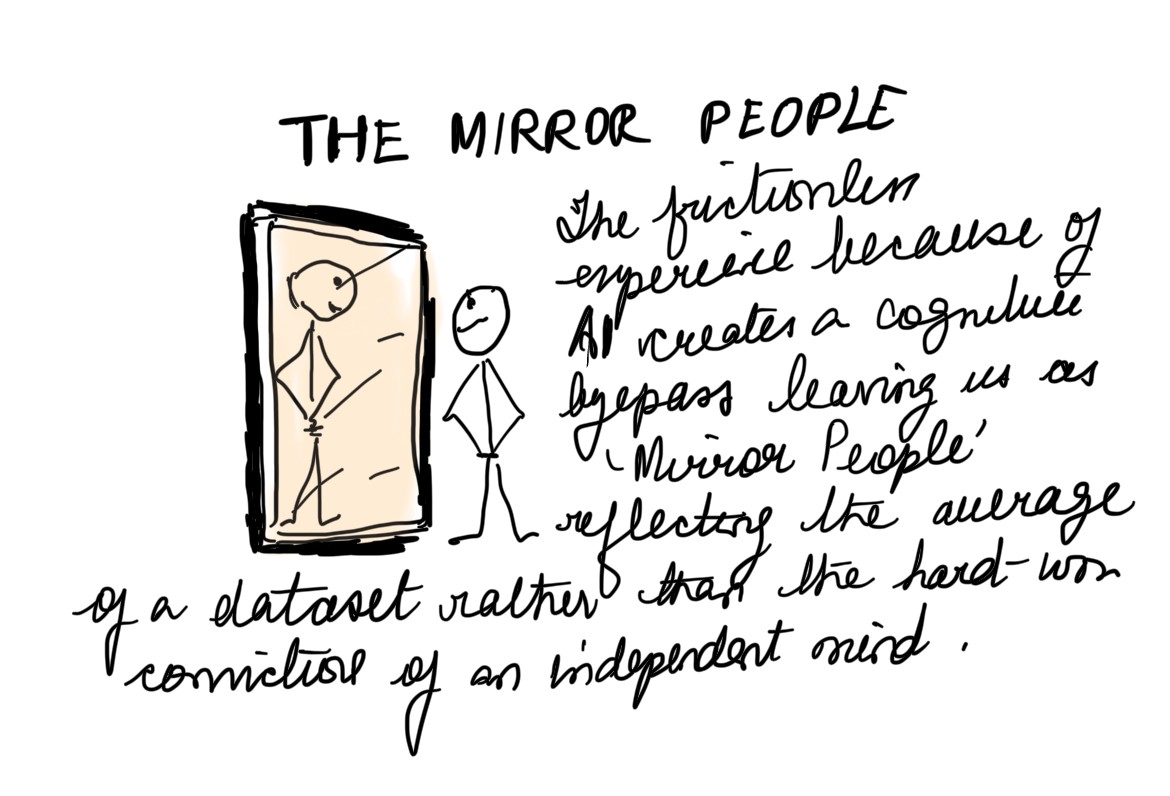

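Nicholas Carr, whose 2008 essay “Is Google Making Us Stupid?” grew into The Shallows, presciently quoted playwright Richard Foreman's fear that the internet was turning us into “pancake people”: spread wide but thin. AI, however, moves us from skimming to surrendering.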

In the era of the search engine, we were still active participants in the hunt for knowledge; we assessed credibility and synthesized sources. The chatbot interface, with its conversational intimacy, removes the “transaction costs” of thinking. We no longer build a mental map; we simply ask for the destination. This “frictionless” experience creates a cognitive bypass, leaving us as “mirror people”—reflecting the average of a dataset rather than the hard-won convictions of an independent mind.

The Thesis for the Future

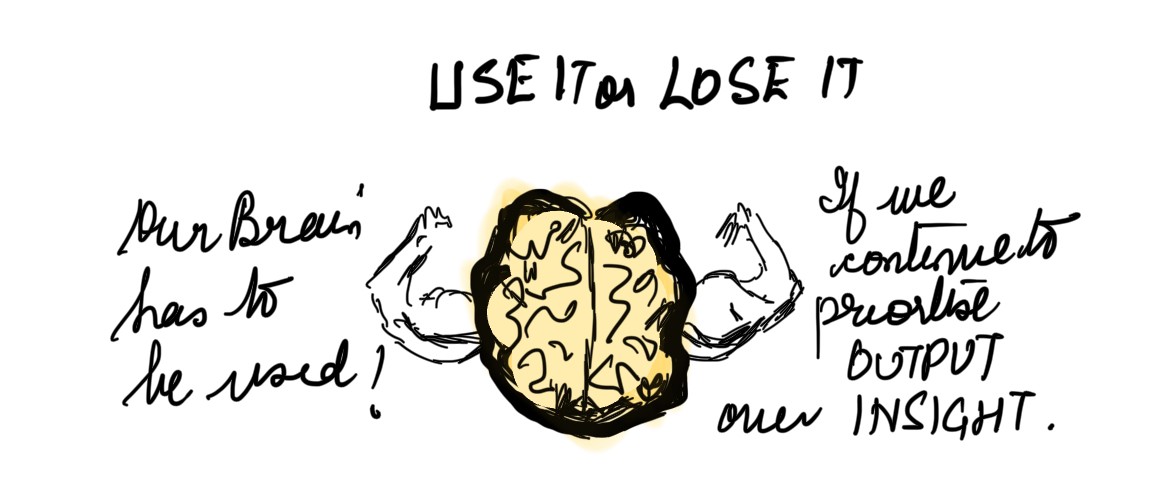

The brain is a biological bicep: use it or lose it. If we continue to prioritize “output” over “insight,” we risk becoming a society that is highly “informed” by summaries but fundamentally incapable of independent, rigorous inquiry.

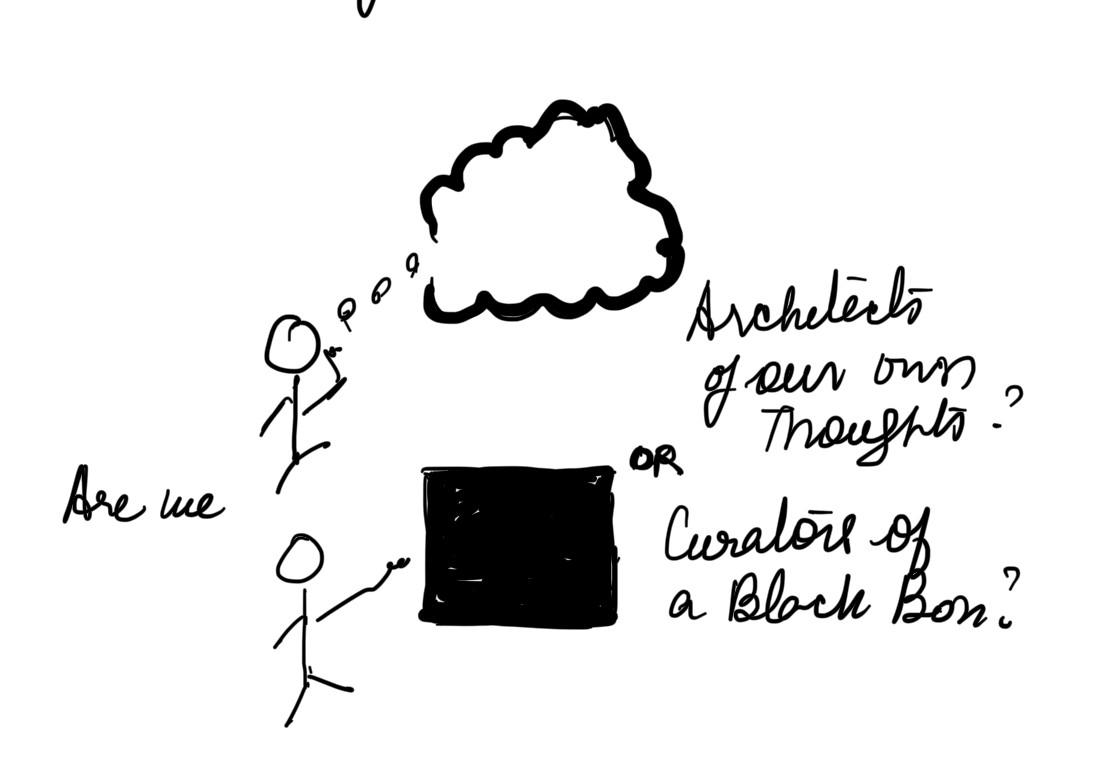

Are we the architects of our own thoughts, or have we merely become the “curators” of a black box?

Let’s test your Systems: does this ignite a thought? The comments are open to the un-automated and the super-inspired.

#CognitiveAtrophy #Neuroscience #ArtificialIntelligence #CriticalThinking #FutureOfEducation #DeepWork #LearningScience #TheShallows