From Information Overload to Intentional Intelligence

March 18, 2026

Creating cognitive equilibrium in the time of AI is less about resisting technology and more about redesigning our habits of thinking, learning, and leading. In higher education and knowledge work, this means using AI as a cognitive partner that reduces overload while protecting autonomy, judgment, and critical reflection.

Cognitive equilibrium, in Piagetian terms, is the dynamic balance between assimilation and accommodation as we integrate new information into existing schemas. In an AI-saturated environment, that equilibrium is constantly perturbed by the volume, velocity, and verisimilitude of machine-generated content.

Recent work on the “cognitive paradox of AI in education” shows that AI can act as both a cognitive amplifier and a cognitive inhibitor: it can scaffold higher-order thinking, but it can also induce over-reliance, fixation, and reduced creative confidence if used uncritically. This calls for intentional design of AI use that sustains metacognition, self-determination (autonomy, competence, relatedness), and human judgment rather than outsourcing them.

Practices for equilibrium

Creating cognitive equilibrium in the time of AI may require three deliberate practices:

Curated cognitive load:

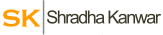

Modern cognitive science highlights a growing tension between our evolutionary biology and the relentless “firehose” of the digital age.

In his foundational work, The Shallows: What the Internet Is Doing to Our Brains, Nicholas Carr argues that the rapid-fire, fragmented nature of online information is physically rewiring our neural pathways, eroding our capacity for the “deep thought” necessary for complex problem-solving.

This neurological strain often manifests as what Dr. David Lewis famously termed “Information Fatigue Syndrome” (IFS), a psychological state in which the sheer volume of data becomes a barrier rather than an asset. When the brain is pushed past its processing limits, IFS sets in, leading to paralyzed decision-making, increased anxiety, and a significant decline in creative output.

Therefore, it is important to use AI to structure complexity, not to erase it. Adaptive and neuro-adaptive systems can reduce extraneous cognitive load while preserving the mental effort required for deep understanding and transfer, effectively transforming a chaotic information environment into a curated cognitive load that challenges the mind without overwhelming it. By strategically filtering noise while maintaining “desirable difficulties,” these systems ensure that the learner remains in the optimal zone for intellectual growth and long-term retention.

AI-augmented metacognition:

Reclaiming cognitive agency in an era of digital distraction requires a deliberate shift toward intentionality, moving away from “autopilot” consumption and toward structured frameworks for focus.

A central pillar of this transition is Rob Walker’s The Art of Noticing, which provides practical exercises designed to sharpen observation and break the cycle of mindless scrolling. To manage the sheer volume of data we encounter, Tiago Forte introduces the Building a Second Brain methodology, an approach that externalizes information storage to a digital system, thereby freeing the biological mind to focus on creative intent rather than mere retention.

This ability to maintain concentration is what Cal Newport defines in Deep Work as a modern “superpower,” arguing that in a noisy economy, the most valuable professionals are those who can produce high-quality output by filtering out the superficial and leaning into undistracted, high-intensity focus.

We need to treat AI as a thought partner that exposes blind spots, surfaces counter-arguments, and supports reflective judgment, rather than as an answer engine that short-circuits struggle. Used this way, AI extends our internal reasoning into a state of AI-augmented metacognition. By using these systems to externalize and audit our own thinking processes, we move beyond simple information retrieval toward a higher-order awareness of how we learn, decide, and correct our own intellectual biases.

Ethical and epistemic literacy:

As we move toward a future defined by Intentional Intelligence, the definition of human capability is shifting from the ability to store information to the mastery of knowing where to find and how to apply it. This evolution requires a rigorous defense of human agency against the firehose of automated data.

In The Age of AI, authors Henry Kissinger, Eric Schmidt, and Daniel Huttenlocher argue that without clear human intent to guide these systems, we risk entering a destabilizing era of automated misinformation. This challenge is compounded by what scholars Matthew Crawford and Michael Goldhaber describe as the Attention Economy: in a world of infinite information, human attention, not data, is the primary scarce resource.

Navigating this landscape requires a grounded approach, such as the one proposed by David M. Levy in Mindful Tech, which advocates for bringing contemplative practices into our digital interactions to ensure our technology serves our values rather than eroding them.

In the Attention Economy, where information is infinite but focus is scarce, epistemic literacy serves as the essential filter for determining which data is worthy of that limited attention. This synergy ensures that we don’t just consume content efficiently, but critically evaluate its truth-value and origin before allowing it to shape our knowledge. We should embed discussions of bias, transparency, data ethics, and explainability into how learners and leaders engage with AI, so that cognitive equilibrium includes moral and civic orientation, not just efficiency.

Creating cognitive equilibrium in the time of AI is ultimately a design question: How do we architect curricula, organisations, and personal practices so that AI reduces noise but does not erode the very capacities that make us human—discernment, judgment, and meaning-making?

These are the questions that will lead our tomorrow!